February 7, 2026

Should AI decide whether you live or die?

A controversial suicide pod known as Sarco could soon rely on artificial intelligence (AI) to decide who is allowed to die, forcing a global reckoning over whether a machine should ever make irreversible end-of-life decisions.

At first glance, the Sarco capsule, developed by Australian euthanasia campaigner Philip Nitschke, looks like something from a design exhibition: sleek, minimalist, and almost serene.

It is also a suicide device.

Sarco is essentially a 3D-printed pod that delivers nitrogen gas, causing hypoxia and death within minutes. Nitschke describes it as a dignified way to die, free from doctors, prescriptions, and institutional oversight.

It’s not the pod itself that incites controversy, though.

Nitschke has proposed that a conversational AI avatar would determine whether a person has the mental capacity to make an informed decision to end their life. If the system approves it, the pod activates.

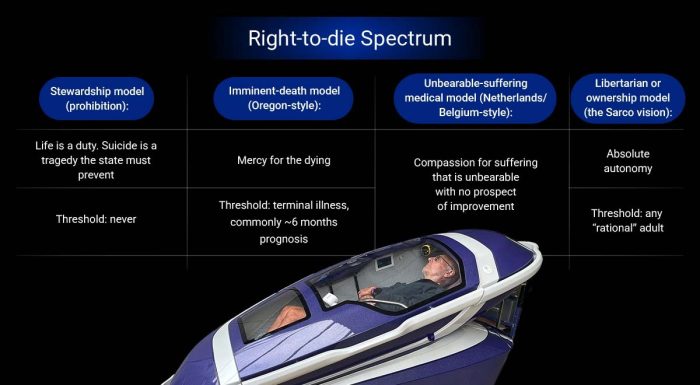

Cybernews spoke to Dr. Jenny Shields, a clinical health psychologist and bioethicist based in The Woodlands, Texas, about the limits of AI. She began by describing four ethical positions along the so-called “right-to-die spectrum,” which ranges from complete prohibition to absolute autonomy for a person to make their own decision.

According to Shields, most legal systems sit somewhere in between, while Nitschke is proposing to automate the fourth position, the libertarian or ownership model, by handing decision-making power to the Sarco pod.

Shields said that the path the AI takes would likely depend on the moral framework encoded into the system.

“If the system is built to privilege autonomy over protection, it will predictably authorize death more often. If it is built around stewardship, it will predictably deny,” she said.

The clinical practice of a suicide pod

In real clinical practice, mental capacity is not fixed. It fluctuates with pain, medication, exhaustion, fear, and social pressure. Assessments are ongoing and are rarely one-off conversations.

They unfold over time, sometimes across multiple encounters, with different professionals comparing impressions, relying heavily on context.

A human practitioner notices hesitation, possible intoxication, inconsistencies, and subtle forms of coercion such as fear of medical debt. Visits include nuances that go unspoken.

Sometimes the pivotal factors in deciding whether euthanasia is approved are events that happen outside the clinician’s room, such as family conflicts. AI is sorely lacking in this domain.

According to Shields, AI is context-blind: “It cannot reliably detect coercion happening off-camera. It cannot smell alcohol. It cannot notice quiet intimidation. It cannot reliably tell the difference between wisdom and a rehearsed script.”

In other words, the technology can standardize questions, but it cannot reliably read the room. “In life and death decisions, the room matters,” Shields said.

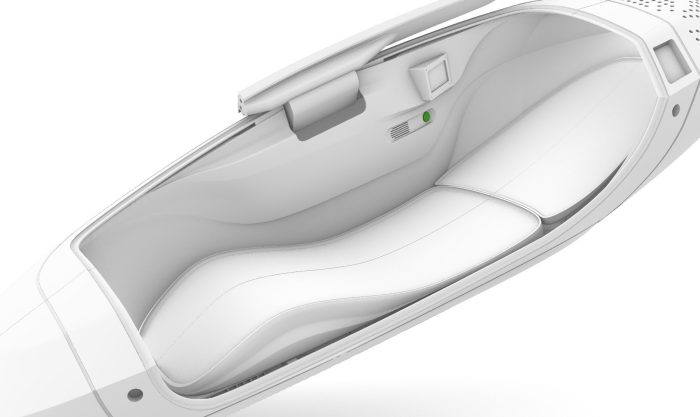

Sarco interior

Errors that cannot be undone

Assisted dying also offers zero margin for error. For example, when AI is used to give an opinion on prescribing medicine, a general (human) practitioner can cross-reference and even overrule the system.

But if, as in the case of Sarco, an algorithmic decision is the final gatekeeper, with no error report or feedback possible from the receiving end, things start to look morally catastrophic.

As Shields explains, “In this setting, an error rate is not a statistic. It is bodies. You cannot patch software after someone is dead.”

If a clinician makes a mistake, their actions may lead to an investigation, and they may be held legally accountable.

Using a system like Sarco repositions assisted dying as a process governed by predefined checks, rather than a series of discretionary clinical decisions.

Who gets control of the gate?

The creator of the suicide pod, Philip Nitschke, became the first practitioner to administer a legal, voluntary lethal injection in 1996.

The patient had prostate cancer and was able to press the button on the side of the bed of his own volition.

His Sarco machine reflects a radical departure from those days, presenting a model of death with minimal friction.

However, as Shields points out, when doctor and patient disagree, resistance can be an important intervention:

“A human conversation has friction. People love to mock friction, but friction is often the safety feature. It is where ambivalence shows up. It is where someone finally says – I do not actually want to be dead, I just want this to stop.”

The problem is that AI cannot be morally neutral. Any system that assesses capacity must prioritize one set of values over another, such as autonomy over protection, or access over stewardship.

That is why the Sarco debate matters beyond one machine.

Beyond technological capability, the question remains whether society is willing to give software ultimate authority over human life.

As Shields concludes: “This isn’t really about whether an AI can assess capacity. It’s about whether we are comfortable giving software root access to a human life.”
